
Why Insurance Companies Need Private AI + LLM Underwriting

AI is already reshaping insurance, though not always in obvious ways. It shows up in pricing, claims, and even customer support. Most insurers have started experimenting; some are scaling it quietly. At the same time, large language models have changed what’s possible. Not just automation but understanding. Documents, emails, reports. The messy stuff.

This is where things shift.

As useful as AI is, insurance runs on sensitive data. Customer profiles, medical records, financial records. Putting that into public systems is not as straightforward as it sounds. Which is why private AI and LLM underwriting are starting to move from “nice to have” to something closer to essential.

Overview of AI Adoption in Insurance

Most insurers didn’t jump into AI all at once. It started with small use cases. Fraud detection. Basic automation. Maybe some predictive analytics layered on top.

Now it’s deeper.

AI is being used to speed up claims, improve pricing models, and reduce operational load. In many cases, it works well. But it also exposes gaps. Especially when systems can’t communicate with each other or when decisions still depend heavily on manual review.

That’s the friction point.

Where AI Is Already Being Used

| Area | What AI Does | Impact |
| --- | --- | --- |
| Claims Processing | Automates validation and routing | Faster settlements |
| Fraud Detection | Identifies anomalies and patterns | Reduced fraud losses |
| Pricing | Improves risk-based pricing models | Better profitability |
| Customer Support | Chatbots and automation | Faster responses |

Emergence of Large Language Models (LLMs)

LLMs changed the conversation because they handle language, not just numbers. Underwriting has always depended on documents. Long ones. Financial statements, inspection reports, policy histories. Humans read them, slowly. LLMs don’t.

They scan, interpret, summarise. More importantly, they connect context across documents. Something traditional systems struggle with.

This sounds obvious. It usually isn’t.

Traditional Systems vs LLMs

| Capability | Traditional Systems | LLMs |
| --- | --- | --- |
| Structured Data | Strong | Strong |
| Unstructured Data | Limited | Very strong |
| Context Understanding | Basic | Advanced |
| Speed | Moderate | High |

Need for Secure, Private AI Environments

Here’s the catch.

Most powerful AI tools today run on shared infrastructure. Data goes out, gets processed, and comes back. That works fine for generic tasks.

Insurance is not a generic task.

Data leakage, compliance risks, and unclear data ownership. These are not edge cases. They’re everyday concerns. More often than not, this is where adoption slows down. Which is why private environments matter.

Enkefalos GenAI Foundry™ delivers full control – see the difference

| Factor | Public AI | Private AI |
| --- | --- | --- |
| Data Control | Limited | Full control |
| Compliance | Uncertain | Easier to manage |
| Security | Shared risk | Controlled environment |
| Customisation | Limited | High |

Understanding Why Insurance Companies Need Private AI and LLM Underwriting

What Private AI Platforms Are

Private AI basically means running AI within your own controlled setup. That could be on-premises, in a private cloud, or in another tightly controlled environment.

Nothing leaves unless you want it to.

It gives insurers control over:

- Data access

- Model behaviour

- Security layers

And control matters more than capability in this space.

What LLM Underwriting Means in Insurance

LLM underwriting is simpler than it sounds.

Instead of underwriters manually going through every document, LLMs handle the first layer. They read, extract key details, and organise everything.

Not perfect. But fast.

Underwriters then step in with better context instead of starting from scratch. That changes the nature of the job.

Less reading. More decision-making.

How Both Technologies Work Together

On their own, both do useful things. But that’s not really the point.

The real shift happens when they’re combined.

Private AI keeps everything inside controlled systems. LLMs handle the messy part, which is understanding documents and context. One brings control. The other brings capability.

Put them together, and the workflow starts to feel different.

Documents come in, usually in mixed formats. The model reads them, pulls out what matters, and passes structured data into internal systems. No back-and-forth with external tools. No uncertainty about where the data is going.

That’s what makes this setup actually usable in real environments, not just in demos.
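To make that flow a little more concrete, here is a minimal sketch in Python. It is an illustration, not how any particular platform implements it. The `extract_fields` function is a stand-in for a call to a privately hosted LLM (regexes are used only so the example runs on its own), and the field names, risk thresholds, and in-memory `audit_log` are all assumptions made for the example.

```python
import json
import re
from dataclasses import dataclass, asdict


@dataclass
class Submission:
    applicant: str
    annual_revenue: float
    prior_claims: int


def extract_fields(document_text: str) -> Submission:
    """Stand-in for a privately hosted LLM call (hypothetical).

    A real deployment would send document_text to a model running inside
    the insurer's own environment. Regexes are used here only so the
    sketch runs without any external service.
    """
    applicant = re.search(r"Applicant:\s*(.+)", document_text)
    revenue = re.search(r"Annual revenue:\s*\$?([\d,]+)", document_text)
    claims = re.search(r"Prior claims:\s*(\d+)", document_text)
    if not (applicant and revenue and claims):
        raise ValueError("missing required field; route to manual review")
    return Submission(
        applicant=applicant.group(1).strip(),
        annual_revenue=float(revenue.group(1).replace(",", "")),
        prior_claims=int(claims.group(1)),
    )


def assess_risk(sub: Submission) -> str:
    """Toy internal rule; the thresholds are illustrative assumptions."""
    if sub.prior_claims >= 3:
        return "high"
    if sub.prior_claims >= 1 or sub.annual_revenue < 100_000:
        return "medium"
    return "low"


def process(document_text: str, audit_log: list) -> dict:
    """Intake, extraction, evaluation, then a logged output."""
    sub = extract_fields(document_text)
    result = {**asdict(sub), "risk_band": assess_risk(sub)}
    # Every result is recorded, so the decision trail stays internal.
    audit_log.append(json.dumps(result, sort_keys=True))
    return result


if __name__ == "__main__":
    log = []
    doc = "Applicant: Acme Bakery\nAnnual revenue: $250,000\nPrior claims: 1\n"
    print(process(doc, log))
```

The point of the shape is that every step is a plain function call inside one process boundary. Swapping the regex stand-in for a real model changes `extract_fields` and nothing else.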

Combined Workflow View

| Step | What Actually Happens | Outcome |
| --- | --- | --- |
| Data Intake | Files come in from multiple sources | Everything stays internal |
| Processing | LLM reads and organises content | Usable structured data |
| Evaluation | Internal models assess risk | Faster decision cycles |
| Output | Results stored and logged | Full visibility and control |

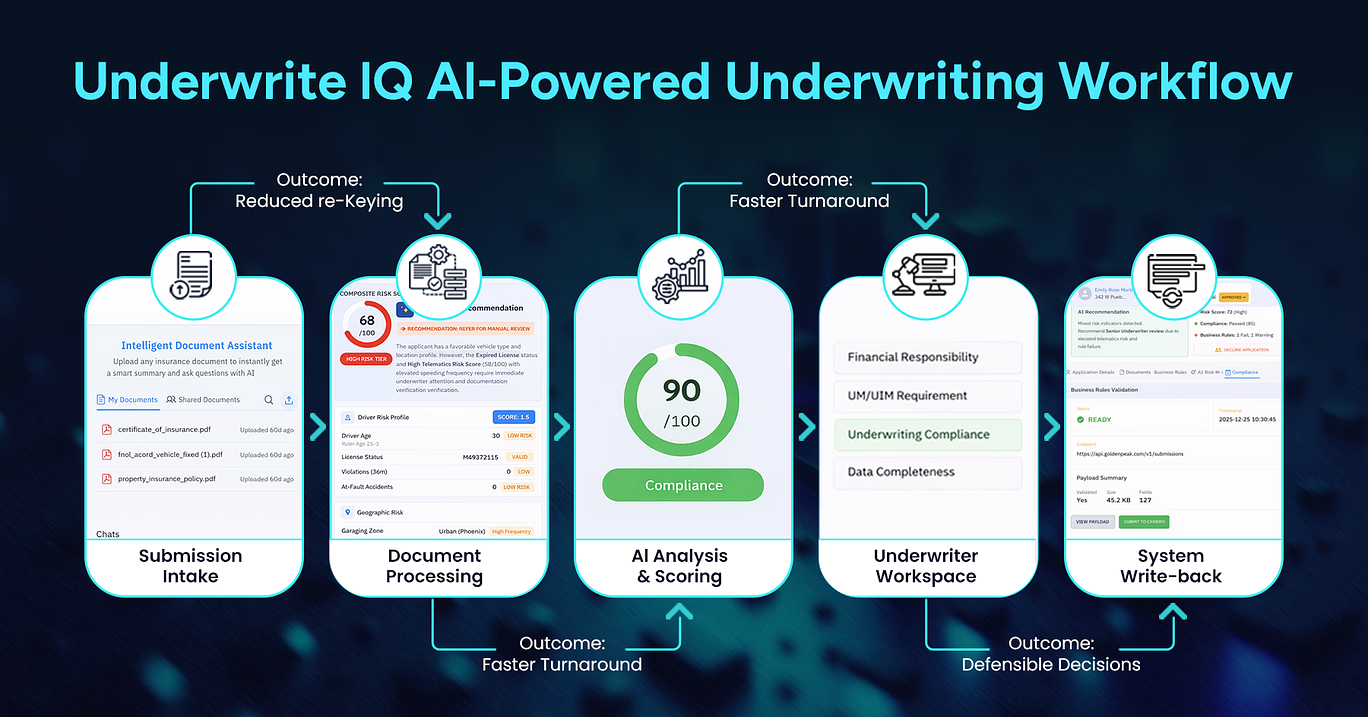

Enkefalos GenAI Foundry™ LLM underwriting workflow: From submission to API write-back – all private, controlled, compliant.

Private AI platforms can be scaled according to organizational needs. Whether expanding to new geographies or adding new product lines, these systems can adapt without compromising control.

Challenges in Traditional Underwriting

1. Manual and Time-Consuming Processes

A lot of underwriting still comes down to reading.

Documents, attachments, notes, and sometimes even scanned files that aren’t easy to work with. Then comes cross-checking, verifying, and going back and forth when something is missing.

It adds up.

Even a fairly standard case can take longer than expected. Not because it’s complex, but because the process is.

2. Inconsistent Risk Assessment

Two underwriters can look at the same case and come to slightly different conclusions. It happens more often than expected.

That inconsistency affects pricing, approvals, and overall risk exposure.

3. Data Silos and Limited Insights

Data exists, but not in one place.

Claims data sits somewhere. Customer data is somewhere else. Underwriting records in another system entirely. Connecting all of it is not easy.

So, decisions are made with partial visibility.

Traditional Underwriting Gaps

| Issue | What It Leads To |
| --- | --- |
| Manual workflows | Slower decisions than necessary |
| Inconsistent judgment | Variation in pricing and approvals |
| Disconnected data | Gaps in overall risk visibility |

Why Private AI Is Critical for Insurance

1. Handling Sensitive Customer and Financial Data

This is where things get serious.

Insurance data isn’t just another dataset. It includes identity details, financial records, and sometimes medical history. Even small exposure risks can create bigger problems later.

Most teams already know this, but it tends to get underestimated during AI adoption.

Private AI reduces that uncertainty. Data stays where it’s supposed to stay.

2. Ensuring Regulatory Compliance

Regulations are only getting stricter.

Different regions have different rules. Data residency, auditability, and consent. It’s a lot to manage. Public AI systems don’t always align neatly with these requirements.

Private setups give insurers more control over compliance.

3. Maintaining Full Control Over Data and Models

There’s also the question of transparency.

With private AI, insurers can monitor how models behave, adjust them, and audit decisions. That level of visibility matters when decisions impact policyholders directly.

Black-box systems don’t work well here.
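One way to picture what "auditable" could mean in practice is a simple record written for every model-assisted decision. The sketch below is an assumption about what such a record might hold, not a description of any specific product: the field names, the JSON Lines file format, and the choice to store a hash instead of the raw document are all illustrative.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(model_version: str, input_text: str, decision: str) -> dict:
    """Build one audit entry for a model-assisted decision."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        # Store a hash rather than the raw document so the log itself
        # does not become another copy of sensitive data.
        "input_sha256": hashlib.sha256(input_text.encode("utf-8")).hexdigest(),
        "decision": decision,
    }


def append_record(path: str, record: dict) -> None:
    """Append the entry as one JSON line to a log file."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")
```

With the model version and an input fingerprint attached to each decision, a reviewer can later check which model produced which outcome and whether the same document was assessed the same way twice.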

Why Private AI Matters

| Requirement | What It Actually Solves |
| --- | --- |
| Data Privacy | Limits exposure risks |
| Compliance | Keeps systems aligned with regulations |
| Control | Makes monitoring and auditing possible |

Role of LLMs in Underwriting Transformation

1. Automating Document Analysis

This is usually the first place where teams see real impact.

Instead of someone manually going through pages of information, the model does a first pass. It identifies key fields, highlights relevant sections, and removes a lot of repetitive effort.

Not perfect, but it cuts down a big chunk of the workload.

2. Extracting Insights from Unstructured Data

Most insurance data isn’t neatly structured.

It’s buried in reports, emails, and scanned documents. LLMs pull useful information out of that mess and make it usable.

3. Enhancing Risk Profiling and Decision-Making

Better data leads to better decisions.

LLMs don’t just process structured inputs. That’s the difference.

They connect scattered information across documents, which gives underwriters a clearer view of the full picture. Risk assessment becomes less fragmented.

It still requires human oversight, and it’s still not perfect. But the starting point is much stronger.

LLM Impact Areas

| Function | Before LLMs | After LLMs |
| --- | --- | --- |
| Document Review | Manual | Automated |
| Data Extraction | Partial | Comprehensive |
| Risk Analysis | Limited context | Context-rich |

Key Benefits of Private AI + LLM Underwriting

1. Faster Underwriting Decisions

Turnaround time improves, sometimes noticeably.

Applications move through the system with fewer delays. Cases don’t sit idle waiting for manual review unless they actually need it.

2. Improved Accuracy and Reduced Human Errors

Consistency tends to improve over time.

When the same logic is applied across cases, fewer details get missed. It doesn’t eliminate errors completely, but it reduces the obvious ones.

3. Enhanced Customer Experience

Customers usually don’t see the system behind the scenes. But they feel the outcome.

Faster responses. Fewer follow-ups. Less friction during onboarding.

That’s where the difference shows up.

4. Scalable and Efficient Operations

As volumes increase, systems don’t break.

Private AI setups scale without needing proportional increases in manpower. That operational shift doesn’t always look dramatic from the outside. But internally, it changes how teams handle volume.

Benefits at a Glance

| Benefit | What Changes |
| --- | --- |
| Speed | Quicker processing across cases |
| Accuracy | Fewer manual gaps |
| Experience | Smoother customer journey |
| Scalability | Handles growth without strain |

Use Cases in Insurance

1. Automated Policy Issuance

For simpler cases, the process can move end-to-end with minimal intervention.

Not every application needs a manual review. Filtering those out makes a difference.
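The filtering step can be as simple as a few explicit rules sitting in front of the underwriting queue. Here is a minimal sketch, assuming the extraction step reports a confidence score and a count of missing fields. The `Application` fields and both thresholds are hypothetical and not taken from any real underwriting guideline.

```python
from dataclasses import dataclass


@dataclass
class Application:
    extraction_confidence: float  # 0.0 to 1.0, reported by the extraction step
    missing_fields: int
    sum_insured: float


# Illustrative thresholds only.
MIN_CONFIDENCE = 0.9
MAX_AUTO_SUM_INSURED = 500_000


def route(app: Application) -> str:
    """Return 'auto_issue' for simple cases, else 'manual_review'."""
    if app.missing_fields > 0:
        return "manual_review"
    if app.extraction_confidence < MIN_CONFIDENCE:
        return "manual_review"
    if app.sum_insured > MAX_AUTO_SUM_INSURED:
        return "manual_review"
    return "auto_issue"


if __name__ == "__main__":
    print(route(Application(0.97, 0, 120_000)))
    print(route(Application(0.97, 2, 120_000)))
```

Anything the rules are unsure about falls through to a person, so the automation only ever handles the cases it was explicitly allowed to.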

2. Risk Assessment and Scoring

This is where LLMs quietly add value.

They bring context from documents that would otherwise be ignored or skimmed through. That leads to more grounded risk scoring.

3. Claims Evaluation Support

Claims teams deal with volume.

Pre-processed summaries help them move faster without having to dig through every document from scratch.

4. Fraud Detection Insights

AI can highlight patterns that don’t immediately stand out.

Not always accurate. But often enough to be useful.

Key Use Cases Overview

| Use Case | What Changes | Result |
| --- | --- | --- |
| Policy Issuance | Less manual filtering | Faster onboarding |
| Risk Scoring | More contextual inputs | Better pricing decisions |
| Claims Support | Pre-analysed data | Faster claim handling |
| Fraud Detection | Pattern recognition | Early risk signals |

Challenges and Considerations

1. Implementation Complexity

This part tends to get underestimated.

Setting up private AI is not just about deploying a model. It involves infrastructure decisions, data flow design, and internal alignment.

Things rarely work perfectly in the first iteration.

2. High Initial Investment

There is a cost barrier.

Infrastructure, tooling, skilled teams. It requires upfront commitment, and returns don’t always show up immediately.

That’s usually where hesitation comes in.

3. Need for Skilled AI Professionals

You need people who understand both AI and insurance.

That combination is still relatively rare.

4. Integration with Legacy Systems

Most insurers run on older systems.

Connecting new AI layers to legacy infrastructure can slow things down. This is where most projects get stuck.

Common Challenges

| Challenge | Reality |
| --- | --- |
| Complexity | Requires cross-team coordination |
| Cost | High initial spend |
| Talent | Limited availability |
| Legacy Systems | Difficult integration |

Conclusion

Private AI and LLM underwriting are not just upgrades to existing systems. They change how underwriting works at a fundamental level. The need is becoming clearer. AI without control introduces risk. Control without intelligence limits value. Combining both is what makes this practical. For insurers, this isn’t about chasing trends. It’s about staying competitive in a space where speed, accuracy, and trust all matter at the same time. And more often than not, this is where the gap between early adopters and everyone else starts to widen.

FAQs

1. What is private AI in insurance underwriting?

It’s AI that runs within the insurer’s own secure environment, so sensitive data never leaves their control.

2. How do LLMs improve underwriting processes?

They read and summarise documents quickly, so underwriters spend less time processing and more time deciding.

3. Why is data privacy important in AI underwriting?

Because insurance data is highly sensitive. Even small risks can lead to compliance issues or loss of trust.

4. Can LLMs replace human underwriters?

Not really. They support the process, but final decisions still need human judgment.

5. What are the benefits of using private AI for underwriting?

Better security, faster processing, more consistent decisions, and easier compliance.

6. How much does it cost to implement private AI?

Costs vary a lot. It depends on infrastructure, scale, and how customised the system needs to be.

7. Is LLM underwriting suitable for all types of insurance?

In most cases, yes. Especially where there’s a lot of document-heavy processing involved.

8. What challenges do insurers face with AI adoption?

Integration with legacy systems, high upfront cost, and a shortage of people who understand both AI and insurance.

9. How does private AI ensure regulatory compliance?

By keeping data within controlled environments and allowing better tracking and auditing.

10. What is the future of AI in insurance underwriting?

More automation, better decision support, and tighter integration with core systems. Gradual, but steady.